Now with randomized physical properties: staining, deposition, specific weight, granulation. This is starting to do things I don’t understand, which is always a good thing.

Impossible pigments for impossible paint

To make impossible paint, you need impossible pigments.

Genuary 2023: Impossible Paint

I love to tinker with code that makes pictures. Most of that tinkering happens in private, often because it’s for a project that’s still under wraps. But I so enjoy watching the process and progress of generative artists who post their work online, and I’ve always thought it would be fun to share my own stuff that way. So when I heard about Genuary, the pull was too strong to resist.

Here’s a snapshot of some work in progress, using a realtime watercolor simulator I’ve been writing in Unity. Some thoughts on what I’m doing here: it turns out I’m not super interested in mimicking reality. But I get really excited about the qualia of real materials, because they kick back at you in such wonderful and unexpected ways. What I seem to be after is a sort of caricature of those phenomena: I want it to give me those feelings, and if it bends the laws of physics, so much the better. Thus, Impossible Paint.

Monster Mash in Two Minute Papers!

If you’re any kind of graphics geek, you’re probably familiar with the outstanding YouTube channel, Two Minute Papers. If not, you’re in for a treat! In this series, Károly Zsolnai-Fehér picks papers from the latest computer graphics and vision conferences, edits their videos and adds commentary and context to highlight the most interesting bits of the research. But what really makes the series great is his delivery: he is so genuinely excited about the fast pace of graphics research, it’s pretty much impossible to come away without catching some of that excitement yourself.

What an honor to have that firehose of enthusiasm pointed at our work for two minutes!

Monster Mash

This past January I had the incredible good fortune to fall sideways into a wonderful graphics research project. How it came about is pure serendipity: I had coffee with my advisor from UW, who’d recently started at Google. He asked if I’d like to test out some experimental sketch-based animation software one of his summer interns was developing. I said sure, thinking I might spend an hour or two… but the software was so much fun to use, I couldn’t stop playing with it. One thing led to another, and now we have a paper in SIGGRAPH Asia 2020!

Have you ever wished you could just jot down a 3D character and animate it super quick, without all that tedious modeling, rigging and keyframing? That’s what Monster Mash is for: casual 3D animation. Here’s how it works:

Heading to SIGGRAPH ’19!

Tomorrow I’m heading down to LA for SIGGRAPH 2019!

Age of Sail will be showing in the Experience Hall: VR Theater Kiosks all week long, and John Kahrs, Theresa Latzko, Scot Stafford and I will be doing a “making of” talk about it this Sunday, July 28 at 2pm in Room 150/151. Hope to see you there!

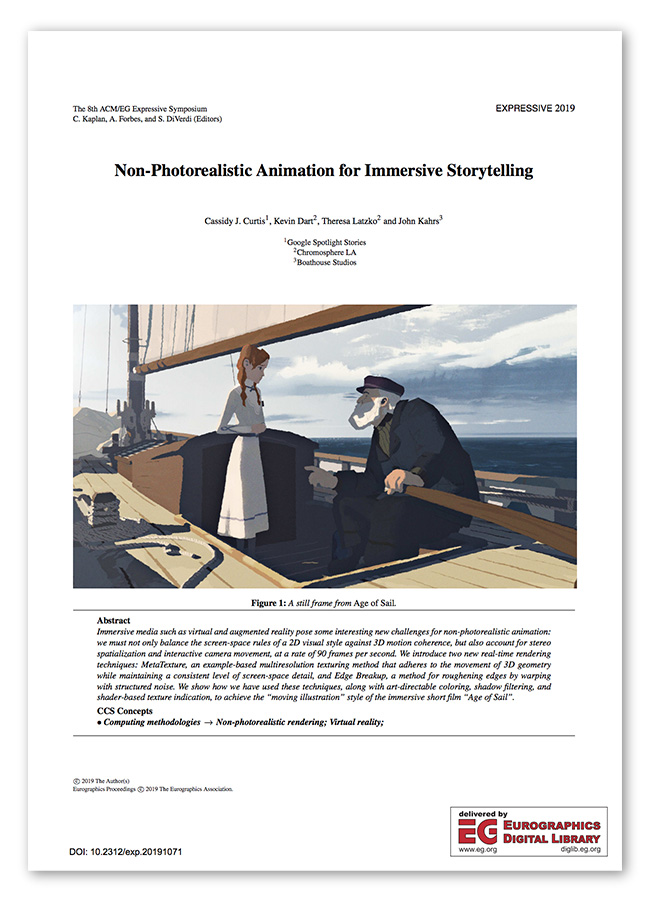

Our paper in Expressive ’19

Non-Photorealistic Animation for Immersive Storytelling, our paper about the look of “Age of Sail” in Expressive ’19, is now available online via Eurographics. (You can also find it on the Google Research site.)

While you’re there, check out the rest of the Expressive ’19 proceedings, including some really interesting work about graffiti (more on that later!)

FMX and Expressive 2019

Next week I’m heading to Europe for a couple of conferences: FMX (Stuttgart) where Jan Pinkava will be giving two talks about our work at Spotlight Stories, and Expressive (Genoa) where I’m presenting a paper about our look development work for Age of Sail: Non-Photorealistic Animation for Immersive Storytelling. I’m beyond excited to meet up with old colleagues and new ones, learn about the latest graphics techniques, mangle two foreign languages, and explore some cities I’ve never been to before. If you’ll be at either of these events, let me know!

“Age of Sail” at the Annies and the VES Awards

It’s not every week you have to fly down to LA twice, but what a great reason to do it. “Age of Sail” was nominated in a bunch of categories, and won Outstanding Production Design at the Annie Awards, and Outstanding Visual Effects in a Real-Time Project at the VES Awards. I’m so grateful to have worked with this amazing team of artists, and so proud of what we’ve accomplished together!

(Apparently I still don’t know how to keep a bow tie straight.)

Non-Photorealistic Animation (SIGGRAPH 1999 course notes)

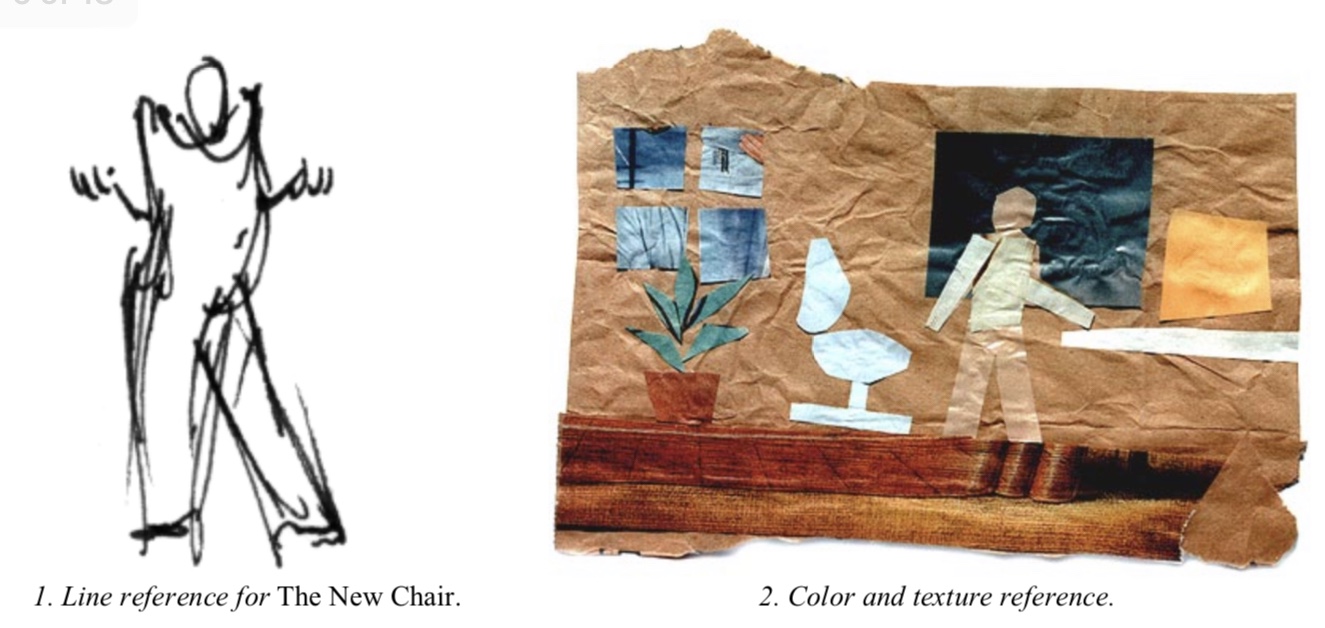

Way back in 1999, I had the pleasure of contributing a segment to a SIGGRAPH course on non-photorealistic rendering. By that point I had made a whopping two-and-a-half animated short films with different visual styles (Brick-a-Brac, Fishing, and a never-finished The New Chair) which in those innocent times made me an authority on the subject. So I threw together a loose framework based on what I’d learned from those experiences, and built my piece of the course around that.

I went back and re-read it the other day, and was surprised to find a lot of it still holds true. In particular, one lesson that we carried through in both Pearl and Age of Sail is that if you plan ahead and you’re smart about it, committing to a stylized look can also save you a lot of time and money.

So if you’re interested in making a film with a new visual style, but you just don’t know where to start, have a look!